“This is awesome!” Jenny, the Product Manager, exclaims to the team. “We’ve been having some challenges retaining customers, and I think we’ve got just the right idea of how to fix it! It’ll require a little retooling of our sign-up flow, but I think the impact will be incredible.”

Great ideas are some of the most powerful accelerants there are. Lighting one off produces a great burst of energy, light and acceleration. But like any accelerant, an idea burns out very quickly if it doesn’t have clear direction and sustaining energy. Ideas in software can be especially fast-burning as we try to convert them into actual, working software. Not only do we have the idea itself to contend with, we also have to integrate it into systems that probably weren’t designed with this new idea in mind. That can lead to friction, bugs, and downright failure to launch.

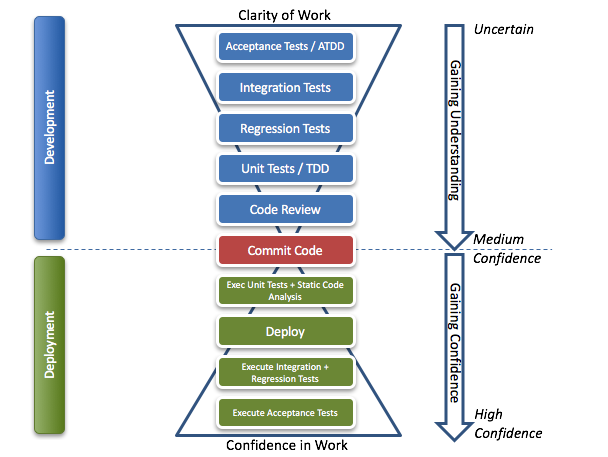

Having worked with many teams like Jenny’s, I’ve begun sharing a common flow for taking ideas in all their uncertainty and getting them to production with a high level of confidence. This isn’t a new model by any means, but it highlights where each step belongs and describes a flow that has worked well with the teams I coach.

We start with a wide interpretation of what the idea is. We take steps during development to focus that interpretation into a clear, releasable chunk. Once we’ve committed the feature, we have a clear idea of what we wanted to build, but not high confidence that it is working as we intended. So the second part of the diagram moves us to that high level of confidence by reusing the work we did to gain understanding and clarity.

Let’s dig a little more into these steps.

Development Cycle

At the top, we start with an idea. We write Acceptance Tests to capture the high-level goals. These are ideally automated (using something like Cucumber or FitNesse), but can also include statements like “We expect to see a 3% increase in conversions”. The point here is to develop concrete, measurable business objectives and goals for the outcome of the feature.
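To make a goal like the conversion target concrete, here is a minimal sketch in Python of how it might be written as an automated check. The function names are hypothetical, and I'm assuming the 3% is a relative lift over a baseline rate; your team may well define it differently (for example, as an absolute change in percentage points).

```python
def conversion_rate(signups: int, visitors: int) -> float:
    """Fraction of visitors who completed sign-up (0.0 when there were no visitors)."""
    if visitors == 0:
        return 0.0
    return signups / visitors


def relative_lift(baseline_rate: float, current_rate: float) -> float:
    """Relative change of the current rate compared to the baseline rate."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive to compute a lift")
    return (current_rate - baseline_rate) / baseline_rate


def meets_conversion_goal(
    baseline_rate: float, current_rate: float, target_lift: float = 0.03
) -> bool:
    """Acceptance check: did conversions improve by at least the target lift?

    The 0.03 default mirrors the "3% increase" goal, read here as a relative
    lift (an assumption made for this sketch).
    """
    return relative_lift(baseline_rate, current_rate) >= target_lift
```

The value of writing it down this way is less about the arithmetic and more about forcing the conversation: which baseline, which time window, and which definition of "increase" the business actually means.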

As we’ve begun capturing our acceptance tests, the team starts looking at how things integrate into the system, using Integration Tests. Here we think about how the feature will be put into the system, and write tests to capture what will happen once the feature has been integrated. These will not be passing, because the feature hasn’t been written yet, but will begin letting the team think through the integration questions and challenges.

Similarly, if we’re integrating into an existing system, we may need a series of Regression Tests to capture how the system works now to make sure it continues functioning as expected. For example, if we’re adding a new module to accept gift cards, we may need regression tests around calculating tax, or total costs when there is no gift card. This gains us confidence that the new feature won’t have unintended side effects.
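As a rough illustration of the gift card example, the sketch below pins down today's behavior before the new module changes anything. Everything in it is assumed for the example: the `order_total` function, the flat 8% tax rate, and the rule that tax is charged on the full subtotal are stand-ins for whatever your system actually does.

```python
from decimal import Decimal

TAX_RATE = Decimal("0.08")  # hypothetical flat tax rate, for illustration only


def order_total(subtotal: Decimal, gift_card: Decimal = Decimal("0")) -> Decimal:
    """Total due: subtotal plus tax, minus any gift card amount, never below zero."""
    tax = (subtotal * TAX_RATE).quantize(Decimal("0.01"))
    total = subtotal + tax - gift_card
    return max(total, Decimal("0.00"))


def test_total_without_gift_card_is_unchanged():
    # Regression: orders with no gift card must total exactly as they did before.
    assert order_total(Decimal("50.00")) == Decimal("54.00")


def test_tax_is_still_calculated_on_full_subtotal():
    # Regression: applying a gift card must not reduce the taxable amount.
    assert order_total(Decimal("50.00"), gift_card=Decimal("10.00")) == Decimal("44.00")
```

The first test is the important one: it would have passed before gift cards existed, and it should keep passing after.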

In parallel, the developers will start writing Unit Tests (ideally using Test-First Development) to capture the behavior and functionality of the code they want to write. These tests are focused at a low level of functionality, and help drive out the design of the actual feature. These are paired with writing the code for the feature itself.

Finally, many teams use a Code Review process to look over the feature. This could be done in real time using Pair Programming, through a Pull Request process, or simply by emailing team members. The goal here is to think through the logic from an outsider’s perspective, and also to surface information about how it integrates with the system.

With our tests written, our design reviewed, and our clarity sharpened, we commit our code and begin our deployment cycle.

Deployment Cycle

Throughout Development, we write tests at various levels not just for testing purposes, but to drive out the understanding of how the code functions and integrates into the system. But at some point we need confidence that we’ve done what we needed.

So once code has been committed, it begins a workflow of gaining confidence. Immediately after code is checked in, the unit tests are run. This gives fast feedback about whether we’ve broken anything, or impacted code quality. We can also run Static Code Analysis, security scans or other tools to make sure we’re following our team’s standards.

If our unit tests pass, we can now start digging deeper into the viability of our code. We start by deploying it – ideally to a test or staging server. These servers will have the data and access to run scenarios. We can then execute our integration and regression tests, ensuring our system is functioning as expected, and finally executing our acceptance tests to make sure we’re meeting the business needs of the feature. If all of these pass, we’ve widened our confidence in the feature.
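The ordering described above (fast checks first, slower and broader checks only if the earlier ones pass) can be sketched as a simple gated sequence. This is a toy model rather than a real CI configuration; `run_pipeline` and the stage names are hypothetical, and in practice each check would invoke your test runner or deployment tooling.

```python
from typing import Callable, List, Optional, Tuple

# A stage is a name plus a check that returns True when the stage passes.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> Tuple[List[str], Optional[str]]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that passed, plus the name of the
    failing stage (or None if every stage passed).
    """
    passed: List[str] = []
    for name, check in stages:
        if not check():
            return passed, name
        passed.append(name)
    return passed, None


if __name__ == "__main__":
    # Placeholder checks; each lambda stands in for a real command.
    stages: List[Stage] = [
        ("unit tests", lambda: True),
        ("static analysis", lambda: True),
        ("deploy to staging", lambda: True),
        ("integration and regression tests", lambda: True),
        ("acceptance tests", lambda: True),
    ]
    passed, failed = run_pipeline(stages)
    print("passed:", passed)
    print("failed at:", failed)
```

Stopping at the first failure is the design choice that matters here: there is little point deploying to staging, or spending minutes on acceptance tests, when a unit test has already told us something is wrong.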

Cycles are actually continuous

So while this is laid out as a single workflow, the idea is to use automation and close collaboration to keep these cycles continually flowing. Being able to know within 10 minutes that a change a pair made to the code impacted conversion rates is a life-changing thing for many teams, allowing them to respond very quickly to the needs of the code – and the business.
